Q&A: When misinformation spreads faster than the virus itself, trusted sources are key

“I’d like to see media be more aggressive about calling out falsehoods,” says Carl Bergstrom, an infectious disease biologist who studies misinformation at the University of Washington. “We’ve got leadership determined to manage this as a public relations crisis versus a public health crisis.”

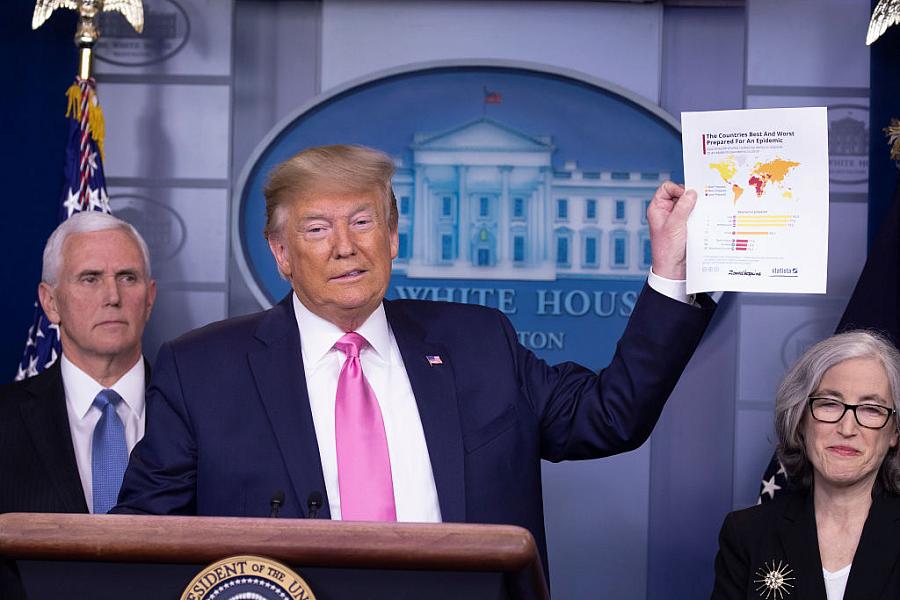

(Photo by Tasos Katopodis/Getty Images)

In an era where the news and social media cycles are spinning faster than ever, it’s very tempting for the public to constantly search for new information, said University of Washington professor Carl Bergstrom, an infectious disease biologist who tracks the spread of misinformation.

But trusting social media, online rumors, news reports and even official channels without carefully considering the evidence behind their claims can lead to confusion and misinformation, something he’s seen proliferate as coronavirus cases grow.

“We’ve evolved to seek information for situations we consider dangerous,” said Bergstrom, whose book on misinformation — “Calling Bullshit: The Art of Skepticism in a Data-Driven World” — comes out this summer. “But, by trying to get fresher information, you’re degrading the quality.”

Misinformation can even come from reputable sources. And the confusion and uncertainty that follow could hinder attempts to slow the outbreak’s spread, he said. I recently chatted with Bergstrom about the types of coronavirus misinformation he’s tracking, the potential impact on the spread of the virus, and how journalists and the public can better sift through contradictory claims.

Q: With your background in infectious disease and your study of misinformation, this seems like the perfect confluence of topics for you.

A: It’s appropriate in that way. I wrote my Ph.D. on signaling and deception in animals. A decade later, I started working on infectious disease. Then, I started working on information networks. It feels like it’s all been leading up to this. When all of this started happening, I thought I could contribute the most by trying to understand the way information is flowing around the coronavirus: the way reliable agencies are handling risk communications, the way disinformation is being injected and the way misinformation is getting passed along by well-meaning people.

Q: What kind of disinformation and misinformation have you noticed with COVID-19?

A: This was on my radar before the first of the year. The earliest record I can find in my email is dated December 30. This was an alert from ProMED Mail about an undiagnosed pneumonia cluster. It caught my attention because this is the sort of early indicator we might see of a novel flu strain.

By the time these illnesses were linked to a coronavirus in early January, I was paying close attention. Initially, there was a little conspiracy talk, but not a lot. As things heated up in Hubei, we saw both reasonable reporting and disinformation being pushed on the internet, largely through social media. Early on, you clearly had opponents of the current regime in China pushing various information, some of which turned out not to be wrong (the idea that the virus is a big deal). There were also stories of escaped bioweapons, and people being shot, which were untrue. At the same time, you had some snake oil salesman types: This is going to be a mass pandemic and you should buy my health tonic now. Other story lines: There’s a huge cover-up going on in China and the U.S. is playing along, or there’s nothing going on in China but the multinational pharmaceutical companies are trying to scare you. There was a non-peer-reviewed paper promoting a bioweapons rumor, suggesting the virus included elements of HIV. Within 48 hours, the paper was retracted.

Here in Washington state, there have been a lot of little pieces of misinformation. When the first U.S. death occurred in the greater Seattle area, there were a lot of questions: Who was this person? There were four separate stories: a 50-year-old man, a 50-year-old woman, a 30-something man, and a 19-year-old man. Those narratives spread on social media.

Q: What do all these instances of misinformation on coronavirus and rumors teach us in aggregate about the nature of misinformation and its spread?

A: It may be a bit early to say we’ve learned a lot of new lessons from this situation, but it certainly has reinforced our previous understanding of the dynamics of misinformation and disinformation.

For example, we see misinformation moving in to fill an uncertainty vacuum. So much is unknown about this virus and how the epidemic will play out. Where reputable sources are unable to provide definitive answers, non-reputable ones fill the gap with rumors. Rumors take off wildly, but corrections rarely spread nearly as well. We also see political polarization, where the truth or falsehood of a claim is judged more by who made it than by the evidence in its support.

Q: What drives the spread of false information?

A: Disinformation is deliberate; misinformation doesn’t have underlying intent. A lot of this might be misinformation. We know there’s a death, but health officials haven’t been able to give us more details, so people try to fill them in.

I think most people are relatively well intentioned. The media is looking for people to talk to and give decisive answers and make strong predictions. If you’re willing to get up there and say definitive things, that explodes across social media. You get invited to talk on CNN. If you ask any reputable public health epidemiologist, “How many people is this going to kill?” they’ll say, “I have absolutely no idea.” That scientific uncertainty is unsatisfying. When you have people willing to forgo that caution – who are willing to say exactly 3.8% of those infected – those people are particularly attractive to put on TV or social media posts.

What’s been really striking for me over the past couple of weeks has been watching the bungled risk communications coming out of the U.S. government. A couple of weeks ago, you had the executive branch promoting the idea that there is “absolutely no problem” while you had people high up in the CDC saying this is a serious concern and it’s likely to disrupt schools, work, travel. You have multiple contradictory messages coming out, which is extremely damaging.

Then you get people like Elon Musk saying the panic around the coronavirus is dumb. People take that seriously. We see this increasing trend toward what people describe as tribal epistemology, where people believe information that comes from people with whom they’re aligned.

Q: What is the impact of contradictory information on the spread of the disease?

A: As you’re getting hit with all of this stuff on all ends of the spectrum, you’re thinking: “I can’t trust anyone. I can’t believe anyone.”

In a case like this, where there are no vaccines or effective antivirals yet, your real strengths in combating the spread are things like hand washing, social distancing, targeted closing of schools, cancelling events, and restrictions on travel. But if people don’t trust the authorities who are trying to implement these steps, it undermines these efforts.

It’s critical that people recognize the seriousness of what we’re facing as a country and we don’t fall prey to false assurances such as, “It’s all going to go away in April when it warms up,” or “It’s no worse than seasonal flu.” If people believe it’s all a hoax or no worse than the flu, that discourages them from taking the steps we need to be taking as a community to shape the trajectory of the epidemic.

If this explodes, it’s a pretty serious disease for a non-trivial fraction of the people who get it. Imagine one in five people needing critical care. You’re going to quickly overwhelm the health system.

Q: Given all this misinformation, what can people do?

A: The main thing people can do is to identify trusted sources and stick to them. For me, there are a handful of public health professionals and health reporters like STAT’s Helen Branswell who have a reputation for great integrity and good judgment. If you hear some rumor that sounds dramatic, withhold judgment until you hear from someone you trust. You could do well by turning to fact-based media sources. I’m going to trust the Washington Post, but I’m not going to trust Russia Today.

Q: What role do journalists have in weeding out the misinformation? And how might they handle what they perceive as misinformation from official sources?

A: I would like to see journalists be a little harder hitting about this. I’d like to see someone say, “Trump says there are only 15 cases,” followed by, “Experts estimate 1,000 to 10,000 cases are currently circulating.” I’d like to see media be more aggressive about calling out falsehoods. We’ve got leadership determined to manage this as a public relations crisis versus a public health crisis. In a public relations crisis, you want to centralize and control communications and be careful about what info gets out.

Q: Do you think the coronavirus is being overhyped?

A: I think it’s a very serious situation. I have seen claims that make it sound worse than it is. I’ve seen a lot more claims saying it’s less severe than it is. The media and the state and local health authorities are starting to get things better calibrated in terms of the severity. It makes a huge difference if social distancing is enough to start to reduce the spread of the epidemic or whether it takes more draconian measures, as China did in Wuhan.